We'll pick it up from where the previous article ended to keep this one short. Here's the GitHub repository.

Our Trix editor component looks like this:

@props(['id', 'value', 'name', 'disabled' => false]) <input type="hidden" id="{{ $id }}_input" name="{{ $name }}" value="{{ $value?->toTrixHtml() }}"/><trix-editor id="{{ $id }}" input="{{ $id }}_input" {{ $disabled ? 'disabled' : '' }} {{ $attributes->merge(['class' => 'trix-content rounded-md shadow-sm border-gray-300 focus:border-indigo-300 focus:ring focus:ring-indigo-200 focus:ring-opacity-50']) }} x-data="{ upload(event) { const data = new FormData(); data.append('attachment', event.attachment.file); window.axios.post('/attachments', data, { onUploadProgress(progressEvent) { event.attachment.setUploadProgress( progressEvent.loaded / progressEvent.total * 100 ); }, }).then(({ data }) => { event.attachment.setAttributes({ url: data.image_url, }); }); } }" x-on:trix-attachment-add="upload"></trix-editor>This is listening to the trix-attachment-add event, which is fired by Trix when we attempt to upload a file, then we upload them to a POST /attachments endpoint using axios. From that endpoint's response, we get the image_url field and set that as an attribute in the Trix Attachment.

The route that handles the uploads looks like this:

Route::post('/attachments', function () { request()->validate([ 'attachment' => ['required', 'file'], ]); $path = request()->file('attachment')->store('trix-attachments', 'public'); return [ 'image_url' => Storage::disk('public')->url($path), ];})->middleware(['auth'])->name('attachments.store');We validate that the user is uploading a file and we then store it in a trix-attachments folder inside the public disk. Next, we get the URL to that file and return it back to the user as the image_url JSON field. Simple enough.

Now, let's add the Media Library package:

composer require spatie/laravel-medialibraryphp artisan vendor:publish --provider="Spatie\MediaLibrary\MediaLibraryServiceProvider" --tag="migrations"php artisan vendor:publish --provider="Spatie\MediaLibrary\MediaLibraryServiceProvider" --tag="config"These steps should add the package, publish its database migrations and config file. Make sure you have the required dependencies for Media Library's optimizations installed.

The Media Library package ships with its own model called Media. There are a couple of requirements when using this model, like its expectation to have a model associated to them, which would be a problem for us since we want to allow attachments to be created before the resource itself (think you're creating a post and adding attachments to it). To simplify things, let's add our own Attachment model. Whenever we upload an attachment, we'll associate the Media model to a corresponding Attachment model. That Attachment model will have its association as nullable so we can create them before the resource that will reference it.

We can add our model like this:

php artisan make:model Attachment -mfThe -m flag will create a corresponding migration for us, and the -f flag creates a model factory.

Let's change the created migration to add the fields we want:

Schema::create('attachments', function (Blueprint $table) { $table->id(); $table->nullableMorphs('record'); $table->string('caption')->nullable(); $table->timestamps();});Run the migrations:

php artisan migrateWe're making a record polymorphic relationship because we could potentially have other resources receiving attachments to its rich text fields as well.

Now, let's update the Attachment model to configure it to receive attachments:

namespace App\Models; use Illuminate\Database\Eloquent\Factories\HasFactory;use Illuminate\Database\Eloquent\Model;use Spatie\Image\Manipulations;use Spatie\MediaLibrary\HasMedia;use Spatie\MediaLibrary\InteractsWithMedia;use Spatie\MediaLibrary\MediaCollections\Models\Media; class Attachment extends Model implements HasMedia{ use HasFactory; use InteractsWithMedia; protected $casts = [ 'verified_at' => 'datetime', ]; protected $guarded = []; public function registerMediaConversions(Media $media = null): void { $this ->addMediaConversion('thumb') ->fit(Manipulations::FIT_CROP, 300, 300) ->nonQueued(); } public function record() { return $this->morphTo(); }}Now, let's create a new trait for the models that we want to associate attachments with. We'll call it HasAttachments:

namespace App\Models; trait HasAttachments{ public function syncAttachmentsMeta() { $this->content->attachments() ->filter(fn ($attachment) => $attachment->attachable instanceof Attachment) ->each(function ($attachment) { $attachment->attachable->update([ 'record' => $this, 'caption' => $attachment->node->getAttribute('caption'), ]); }); } public function attachments() { return $this->morphMany(Attachment::class, 'record'); }}We added the attachments relationship to the trait, but also a syncAttachmentsMeta method. That method is supposed to be called after we save the model with attachments (whenever we change the content rich text field). It will scan the document looking for attachments of the model Attachment and update the model meta data syncing with the caption in the rich text document. Although we're only interested in the caption attribute for now, you can see how you could extract other metadata from the document itself.

This reminds me we need to make the Attachment model an attachable as well. Attachables, in the Rich Text Laravel package, are models that have a rich text representation inside the documents. Let's add the contract and trait to it, we'll also override some of its methods, I'll explain in a bit:

namespace App\Models; // Other use statements...use Tonysm\RichTextLaravel\Attachables\Attachable;use Tonysm\RichTextLaravel\Attachables\AttachableContract; class Attachment extends Model implements HasMedia, AttachableContract{ // Other used traits... use Attachable; private $firstMediaCache; public function richTextPreviewable(): bool { return str_starts_with($this->getFirstMedia()->mime_type, 'image/'); } public function richTextFilename(): ?string { return $this->firstMedia()->file_name; } public function richTextFilesize() { return $this->firstMedia()->size; } public function richTextContentType(): string { return $this->firstMedia()->mime_type; } public function richTextRender(array $options = []): string { return view('trix._attachment', [ 'attachment' => $this, 'media' => $this->firstMedia(), 'options' => $options, ])->render(); } public function toTrixContent(): ?string { return null; } public function getPreviewableUrl(string $convertionName = null): string { return $this->firstMedia()->getFullUrl($convertionName); } public function firstMedia() { return $this->firstMediaCache ??= $this->getFirstMedia(); } public function setRecordAttribute($record) { $this->record()->associate($record); }}Alright, let's go over each method:

- richTextPreviewable: returns a boolean that indicates whether the attachment has a preview image associated. In our case, we're checking if the associated media has a content-type starting with

image/; - richTextFilename: returns the file name. Again, we're delegating that to the associated media;

- richTextFilesize: returns the file size in bytes. Which we're delegating that to the associated media;

- richTextContentType: returns the file content type. Also delegated to the associated media;

- richTextRender: returns the rendered HTML to show this attachment to users (not what renders inside Trix, but the actual final version);

- toTrixContent: returns the rendered HTML to rendered the attachment inside the Trix editor (what we show inside Trix);

The firstMedia, getPreviewableUrl, setRecordAttribute are actually custom methods, not needed for the Attachable contract. We're using the getPreviewableUrl method inside the view, which we'll explore shortly. The setRecordAttribute mutator will be used when we create the attachment, which we'll also explore shortly. And the firstMedia method is a helper method that caches the media instance on the current attachment the first time it's used so we avoid doing another database query when the attachable methods are used.

One thing you may have noticed is that we're returning null in the toTrixContent method. That's because Trix already knows how to render file attachments based on the file type for images and files (see here and here), so we don't actually need a custom HTML representation here. However, we're adding a custom view for the Attachment model for the final render because we cannot use the same template as remote images use (the ones that ship with the package) since some of the APIs changed.

The trix._attachment Blade template should look something like this:

<figure class="attachment attachment--{{ $attachment->richTextPreviewable() ? 'preview' : 'file' }} attachment--{{ $media->extension }}"> @if ($attachment->richTextPreviewable()) <img src="{{ $attachment->getPreviewableUrl() }}" /> @endif <figcaption class="attachment__caption"> @if ($attachment->caption) {{ $attachment->caption }} @else <span class="attachment__name">{{ $media->filename }}</span> <span class="attachment__size">{{ $media->humanReadableSize }}</span> @endif </figcaption></figure>That should be it. Now, let's change the upload endpoint to also create the Attachment model and associate the uploaded file as its media:

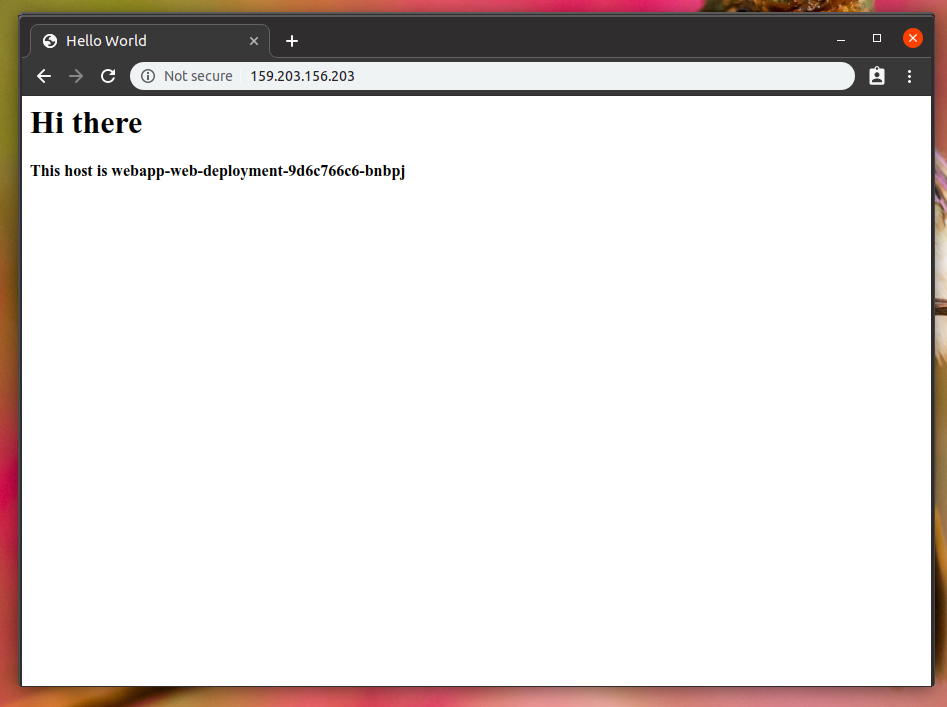

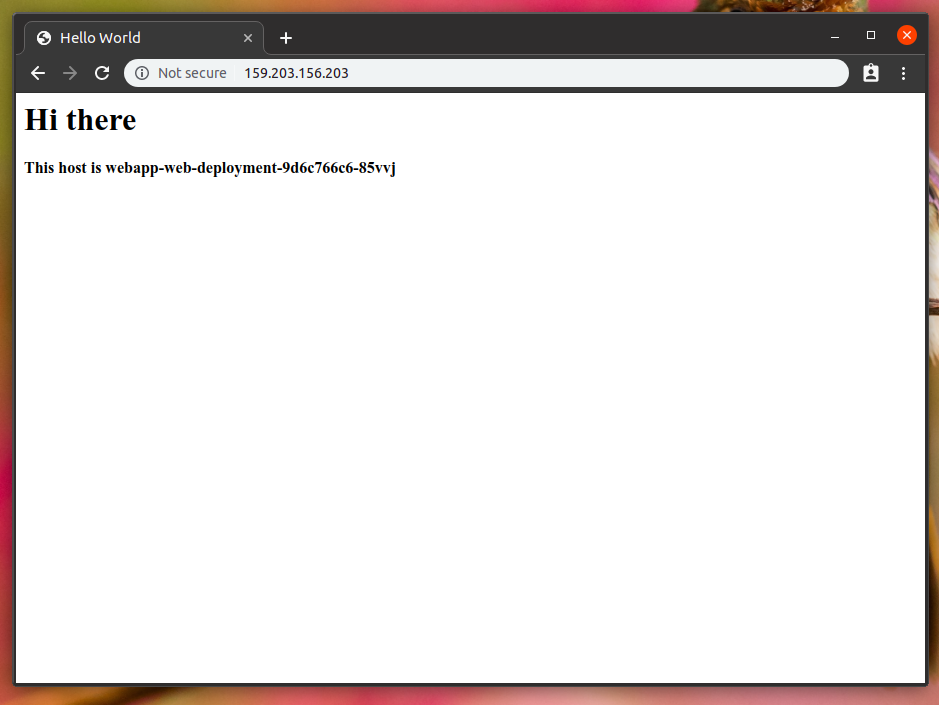

Route::post('attachments', function () { request()->validate([ 'attachment' => ['required', 'file'], ]); /** @var Attachment */ $attachment = Attachment::create([ 'record' => auth()->user(), ]); $media = $attachment->addMedia(request()->file('attachment')) ->toMediaCollection(); return [ 'attachable_sgid' => $attachment->richTextSgid(), 'image_url' => $media->getFullUrl(), ];})->name('attachments.store');Now, we're also returning a attachable_sgid field with the image_url. SGID is short for Signed Global IDs, which are essentually a string key that may represent any model (or object) in our application. You can think of it as a URL for your models. It's provided by the Globalid Laravel package, which the Rich Text Laravel package uses under the hood. That should be added to the Trix attachment in the front-end. Our final version there should be something like this:

@props(['id', 'value', 'name', 'disabled' => false]) <input type="hidden" id="{{ $id }}_input" name="{{ $name }}" value="{{ $value?->toTrixHtml() }}"/><trix-editor id="{{ $id }}" input="{{ $id }}_input" {{ $disabled ? 'disabled' : '' }} {{ $attributes->merge(['class' => 'trix-content rounded-md shadow-sm border-gray-300 focus:border-indigo-300 focus:ring focus:ring-indigo-200 focus:ring-opacity-50']) }} x-data="{ upload(event) { const data = new FormData(); data.append('attachment', event.attachment.file); window.axios.post('/attachments', data, { onUploadProgress(progressEvent) { event.attachment.setUploadProgress( progressEvent.loaded / progressEvent.total * 100 ); }, }).then(({ data }) => { event.attachment.setAttributes({ sgid: data.attachable_sgid, url: data.image_url, }); }); } }" x-on:trix-attachment-add="upload"></trix-editor>Now, let's add the HasAttachments trait to our Post model:

class Post extends Model{ // Other traits... use HasAttachments; // Other methods...}In our PostsController, let's make sure we call the syncAttachmentsMeta whenever a Post is created/updated, should be something like this:

class PostsController extends Controller{ // Other actions... public function store() { $post = auth()->user()->currentTeam->posts()->create( $this->postParams() + ['user_id' => auth()->id()] ); $post->syncAttachmentsMeta(); return redirect()->route('posts.show', $post); } public function update(Post $post) { $this->authorize('update', $post); tap($post) ->update($this->postParams()) ->syncAttachmentsMeta(); if (Request::wantsTurboStream() && ! Request::wasFromTurboNative()) { return Response::turboStream($post); } return redirect()->route('posts.show', $post); }}And with that, our app should be syncing our attachments from the rich text document to the Post model. What is nice about this that we can access our attachments from the Post model directly, without having to scan or even load the rich text document field, something like:

// returns a list of attachments without// having to go through the document...$post->attachmentsThat's it!

I hope you enjoyed this more "advanced" tutorial into the package. I actually have this running on my Turbo Demo App repository, you can see the Pull Request where I implemented this. It has a little bit more, the app there is using Stimulus instead of Alpine, but the idea is the same. And you can see the PR to the demo app from the previous article here.

For the next post in this Rich Text Laravel series I'm planning on adding server-side rendered syntax highlighting for the Trix code snippets in this application using Torchlight.

See you soon!

]]>class User extends Model{} class Team extends Model{} class Reviewer extends Model{ use SoftDeletes; public function reviewer() { return $this->morphTo(); } public function setReviewerAttribute($reviewer) { $this->reviewer()->associate($reviewer); }} class PullRequest extends Model{ public function reviewers() { return $this->hasMany(Reviewer::class); } public function syncReviewers(Collection $reviewers): void { DB::transaction(function () use ($reviewers) { $this->reviewers()->delete(); $this->reviewers()->saveMany($reviewers); }); }}Then, in the PullRequestReviewersController@update action, you would have something like:

class PullRequestReviewersController extends Controller{ public function store(PullRequest $pullRequest, Request $request) { $pullRequest->syncReviewers($this->reviewers($request)); } private function reviewers(Request $request) { // Returns new Reviewers based on the request... }}The PullRequestReviewersController::reviewers method will return a Collection of Reviewer instances. Building those new model instances can be tricky. Think about the form that is needed for this. The bare-minimum version of it would consist of a select field where you would list all Teams and Users as options. You could even group them in optgroup tags and label them accordingly:

<x-select name="reviewers[]" id="reviewers" multiple class="block mt-1 w-full"> <option value="" disabled selected>Select the reviewers...</option> <optgroup label="Teams"> @foreach ($teams as $team) <option value="{{ $team->id }}">{{ $team->name }}</option> @endforeach </optgroup> <optgroup label="Users"> @foreach ($users as $user) <option value="{{ $user->id }}">{{ $user->name }}</option> @endforeach </optgroup></x-select>Not so fast... teams and users may have colliding IDs. Both their Database tables have different sequences. Even if it didn't, let's say you're using UUIDs or something like that, how would you go about deciding which model the UUID belongs to when you're processing the request? All solutions I can think of would require some kind of ad-hoc differentiation between teams and users. Maybe you do something like <table>:<id>, so users options would render to user:1, user:2, etc., while teams options would render to something like team:1, team:2, etc.

Then, you'd have to encode that mapping logic to do the actual fetching. It's messy. There's a better way.

Globalids

The Globalid Laravel package solves this problem. This package is a port of a Rails gem called globalid. Instead of coming up with an ad-hoc solution that would probably be different every time we have a problem like this, we can solve it this way:

<x-select name="reviewers[]" id="reviewers" multiple class="block mt-1 w-full"> <option value="" disabled selected>Select the reviewers...</option> <optgroup label="Teams"> @foreach ($teams as $team) <option value="{{ $team->toGid()->toString() }}">{{ $team->name }}</option> @endforeach </optgroup> <optgroup label="Users"> @foreach ($users as $user) <option value="{{ $user->toGid()->toString() }}">{{ $user->name }}</option> @endforeach </optgroup></x-select>You would need to add the HasGlobalIdentification trait to both the Group and User models:

use Tonysm\Globalid\Models\HasGlobalIdentification; class User extends Model{ use HasGlobalIdentification;} class Team extends Model{ use HasGlobalIdentification;}The options' value fields would look something like this:

gid://laravel/App%5CModels%5CTeam/1gid://laravel/App%5CModels%5CUser/1The %5C here is the backslash (\) encoded to be URL-safe. This will work fine for a quick demo, but I'd highly recommend using something like Relation::enforceMorphMap() and avoiding using the model's FQCN for things like this. If you have a mapped morph, the package will use that. Something like this:

Relation::enforceMorphMap([ 'team' => Models\Team::class, 'user' => Models\User::class,]);And the options' values will then render like this:

gid://laravel/team/1gid://laravel/user/1Then, our backend can be simplified quite a lot, we can leverage the Globalids using the Locator Facade, like so:

use Tonysm\GlobalId\Facades\Locator; class PullRequestReviewersController extends Controller{ public function store(PullRequest $pullRequest, Request $request) { $pullRequest->syncReviewers($this->reviewers($request)); } private function reviewers(Request $request) { return Locator::locateMany(Arr::wrap($request->input('reviewers'))) ->map(fn ($reviewer) => Reviewer::make([ 'reviewer' => $reviewer, ]); }}That's nice, isn't it? The Locator::locateMany accepts a list of Globalids and will return its equivalent models. It's smart enough to only do a single query per model type to avoid unnecessary hops to the database and all. In this case, we used the Locator::locateMany but if we were only dealing with a single option, we could stick to the Locator::locate method, which would take a global ID and return the model instance based on that.

In our case, since we're only dealing with form payloads we could use the globalid path like that, but that's not really safe to use it as a route param, for instance. Instead of encoding the globalid to string, we could call the ->toParam() method, which would return a base64 URL-safe version of the globalid that you can use as a route param. Something like this:

Z2lkOi8vbGFyYXZlbC9ncm91cC8xThis could be useful if you were passing that as a route param like:

POST /pull-requests/123/reviewers/Z2lkOi8vbGFyYXZlbC9ncm91cC8xPreventing Tampering

Ok, all that is fine and all, but there's an issue with this implementation. It's not very secure. Users could tamper with the HTML form and start poking around with your payload. That's not cool. Would be cool if there was a way to prevent users from tampering with the globalids like that, right? Well, there is! It's called SignedGlobalids. The API is slightly the same, but instead of calling ->toGid() on the model, you would call ->toSgid(). Like following:

<x-select name="reviewers[]" id="reviewers" multiple class="block mt-1 w-full"> <option value="" disabled selected>Select the reviewers...</option> <optgroup label="Teams"> @foreach ($teams as $team) <option value="{{ $team->toSgid()->toString() }}">{{ $team->name }}</option> @endforeach </optgroup> <optgroup label="Users"> @foreach ($users as $user) <option value="{{ $user->toSgid()->toString() }}">{{ $user->name }}</option> @endforeach </optgroup></x-select>SignedGlobalids are cryptographically signed using a key derived from your app's APP_KEY, which means users cannot tamper with the form payload. Consuming this on your backend would then look like this:

use Tonysm\GlobalId\Facades\Locator; class PullRequestReviewersController extends Controller{ public function store(PullRequest $pullRequest, Request $request) { $pullRequest->syncReviewers($this->reviewers($request)); } private function reviewers(Request $request) { return Locator::locateManySigned(Arr::wrap($request->input('reviewers'))) ->map(fn ($reviewer) => Reviewer::make([ 'reviewer' => $reviewer, ]); }}The only difference is using locateManySigned instead of locateMany. Similarly, fetching a single resource would be locateSigned instead of the regular locate.

This can prevent users from tampering with the option values, but this does not prevent them from poking around in other places where you also use SignedGlobalids and find a signed option that they want to send in another form. If in your application you had another form that would also show them polymorphic options like that but to other models, for instance. They could then pick those options from the other form and use them on the one for reviewers. Since the options would be signed, your code would be tricked to accept it. That's not cool.

There are actually two ways you could go about it. When locating, you could tell the Locator that you're only interested in SignedGlobalids of the User model, for instance:

private function reviewers(Request $request){ return Locator::locateManySigned(Arr::wrap($request->input('reviewers')), [ 'only' => User::class, ]) ->map(fn ($reviewer) => Reviewer::make([ 'reviewer' => $reviewer, ]);}That would only locate SignedGlobalids for the User model, ignoring every other non-User SignedGlobalId you may have. You can also define purposes for SignedGlobaids. This way, you can prevent users from reusing options just by copying and pasting the values from one form to a totally different one. For instance, our reviewers form could render the options passing the for option to the toSgid():

<x-select name="reviewers[]" id="reviewers" multiple class="block mt-1 w-full"> <option value="" disabled selected>Select the reviewers...</option> <optgroup label="Teams"> @foreach ($teams as $team) <option value="{{ $team->toSgid(['for' => 'reviewers-form'])->toString() }}">{{ $team->name }}</option> @endforeach </optgroup> <optgroup label="Users"> @foreach ($users as $user) <option value="{{ $user->toSgid(['for' => 'reviewers-form'])->toString() }}">{{ $user->name }}</option> @endforeach </optgroup></x-select>Then, in our backend we would also have to specify the same purpose when locating the models, like this:

private function reviewers(Request $request){ return Locator::locateManySigned(Arr::wrap($request->input('reviewers')), [ 'for' => 'reviewers-form', ]) ->map(fn ($reviewer) => Reviewer::make([ 'reviewer' => $reviewer, ]);}If the purpose encoded and signed on the SignedGlobalid doesn't match with the purpose you specify when locating, it wouldn't work.

Alternatively, you could also specify how long this SignedGlobalid will be valid, for instance, which could be useful if you're generating a public access link for some resource but you don't want it to be available forever, which helps preventing data from leaking out of your app in some cases. Read more about SignedGlobalids here.

Globalids are very useful in all sorts of situations where you want to use polymorphism. I'm using that in the Rich Text Laravel package, for instance, to store references to models when you use them as attachments. Instead of serializing the model, we can store the URI to that model and use the Locator to find it for us when it's time to render the document again.

]]>If you prefer video, I've recording a tutorial based on this post:

So, let's dive right in.

The Demo App

Before we start talking about Trix and how the package integrates Laravel with it, let's create a basic journaling application, where users can keep track of their thoughts (or whatever they want, really).

To create the Laravel application, let's use Laravel's installer:

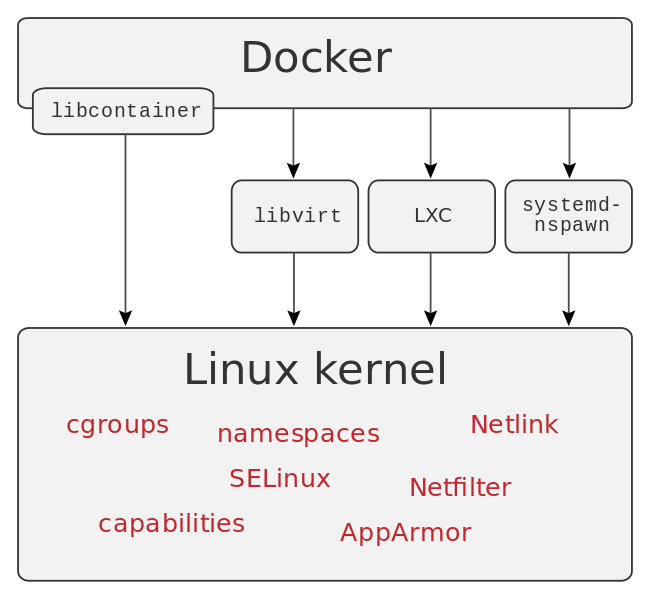

laravel new larajournalI'm gonna be using Laravel Sail, so let's publish the docker-compose.yml file:

php artisan sail:install --with=mysqlYou will need Docker and Docker Compose installed, so make sure you follow their instructions. Also, feel free to use php artisan serve or Laravel Valet, if you want to. It doesn't really matter for what we're trying to do here.

Let's start the services:

sail up -dWe should have both our database and the web server running. You can verify that by visiting http://localhost on your browser, or by listing the ps command, where all statuses should be Up:

sail psLet's install the Breeze scaffolding so we can have basic authentication and a protected area scaffold for us:

composer require laravel/breeze --devphp artisan breeze:installnpm install && npm run devNow, we'll create the model with migration and factory:

php artisan make:model Post -mfLet's add a title and acontentfield to thecreate_posts_table` migration we have just created:

Schema::create('posts', function (Blueprint $table) { $table->id(); $table->foreignId('user_id')->constrained(); $table->string('title'); $table->longText('content'); $table->timestamps();});We also added the Foreign Key to the users table so we can isolate each user's posts. Let's update the User model to add the posts relationship:

class User extends Authenticatable{ use HasApiTokens, HasFactory, Notifiable; // ... public function posts() { return $this->hasMany(Post::class); }}Now, lets edit the DatabaseSeeder to create a default user and some posts as well as some random posts so we can just check that we don't see other user's posts:

User::factory()->has(Post::factory(3))->create([ 'name' => 'Test User', 'email' => 'user@example.com',]); User::factory(5)->has(Post::factory(3))->create();Now, let's edit the PostFactory so we can instruct it how to create new fake posts:

<?php namespace Database\Factories; use App\Models\Post;use Illuminate\Database\Eloquent\Factories\Factory; class PostFactory extends Factory{ protected $model = Post::class; public function definition() { return [ 'title' => $this->faker->sentence(), 'content' => $this->faker->text(), ]; }}And edit the Post model to remove the mass-assignment protection:

class Post extends Model{ use HasFactory; protected $guarded = [];}Now, we can migrate and seed our database:

sail artisan migrate --seedNow, try to login with the user we created in our seeder. You should see the basic dashboard:

Now, let's pass down the user's post in the dashboard route at the web.php routes file:

Route::get('/dashboard', function () { return view('dashboard', [ 'posts' => auth()->user()->posts()->latest()->get(), ]);})->middleware(['auth'])->name('dashboard');Now, make use of the posts variable in the dashboard.blade.php Blade file:

<x-app-layout> <x-slot name="header"> <div class="flex items-center justify-between"> <h2 class="text-xl font-semibold leading-tight text-gray-800"> {{ __('Dashboard') }} </h2> <div> <a href="{{ route('posts.create') }}" class="px-4 py-2 font-semibold text-indigo-400 border border-indigo-300 rounded-lg shadow-sm hover:shadow">New Post</a> </div> </div> </x-slot> <div class="py-12"> <div class="mx-auto max-w-7xl sm:px-6 lg:px-8"> <div id="posts" class="space-y-5"> @forelse ($posts as $post) <x-posts.card :post="$post" /> @empty <x-posts.empty-list /> @endforelse </div> </div> </div></x-app-layout>This view makes use of two components, which we'll add now. First, add the resources/views/components/posts/card.blade.php:

<div class="bg-white border border-transparent rounded hover:border-gray-100 hover:shadow"> <a href="{{ route('posts.show', $post) }}" class="block w-full p-8"> <div class="pb-6 text-xl font-semibold border-b"> {{ $post->title }} </div> <div class="mt-4"> {{ Str::limit($post->content, 300) }} </div> </a></div>This card makes use of a posts.show named route and the dashboard.blade.php file makes use of a posts.create named route, which doesn't yet exist. Let's add that. First, create the PostsController:

php artisan make:controller PostsControllerThen, add this to the web.php routes file:

Route::resource('posts', Controllers\PostsController::class);We're adding a resource route because we'll make use of other resource actions as well.

There's still one component missing from our dashboard.blade.php view, the x-posts.empty. This component we'll have an empty message to show when there are no posts for the current user. Create the empty-list.blade.php file at resources/views/components/posts/:

<div class="p-3 text-center"> <p>There are no posts yet.</p></div>Now, you should be able to see the latest 3 fake posts for the current user in the dashboard.

So far, so good. However, if we click in the "New Post" link, nothing happens yet. Let's add the create action to the PostsController:

/** * Show the form for creating a new resource. * * @return \Illuminate\Http\Response */public function create(){ return view('posts.create', [ 'post' => auth()->user()->posts()->make(), ]);}This makes use of a posts.create view which doesn't yet exist. Create a resources/views/posts/create.blade.php file with the following content:

<x-app-layout> <x-slot name="header"> <h2 class="text-xl font-semibold leading-tight text-gray-800"> <a href="{{ route('dashboard')}} ">Dashboard</a> / {{ __('New Post') }} </h2> </x-slot> <div class="py-12"> <div class="mx-auto max-w-7xl sm:px-6 lg:px-8"> <div class="p-8 bg-white rounded-lg"> <div id="create_post"> <x-posts.form :post="$post" /> </div> </div> </div> </div></x-app-layout>This makes use of a x-posts.form Blade component which we can create the resources/views/components/posts/form.blade.php file with the content:

<form method="POST" action="{{ route('posts.store') }}"> @csrf <!-- Post Title --> <div> <x-label for="title" :value="__('Title')" /> <x-input id="title" class="block w-full mt-1" placeholder="Type the title..." type="text" name="title" :value="old('title', $post->title)" required autofocus /> <x-input-validation for="title" /> </div> <!-- Post Content --> <div class="mt-4"> <x-label for="content" :value="__('Content')" class="mb-1" /> <x-forms.richtext id="content" name="content" :value="$post->content" /> <x-input-validation for="content" /> </div> <div class="flex items-center justify-between mt-4"> <div> <a href="{{ route('dashboard') }}">Cancel</a> </div> <div class="flex items-center justify-end"> <x-button class="ml-3"> {{ __('Save') }} </x-button> </div> </div></form>Almost all components used here comes with Breeze, except for the x-input-validation and the x-richtext components, which we'll add now. Create a resources/views/components/input-validatino.blade.php file with the contents:

@props('for') @if ($errors->has($for)) <p class="mt-1 text-sm text-red-800">{{ $errors->first($for) }}</p>@endifFor the richtext one, however, we're making it a simple textarea for now. Create the resources/components/forms/richtext.blade.php file with the content:

@props(['disabled' => false, 'value' => '']) <textarea {{ $disabled ? 'disabled' : '' }} {!! $attributes->merge(['class' => 'rounded-md shadow-sm border-gray-300 w-full focus:border-indigo-300 focus:ring focus:ring-indigo-200 focus:ring-opacity-50']) !!}>{{ $value }}</textarea>Ok, now if you click in the "New Posts" link, we should see the create posts form. To be able to create a post, let's add the store action to the PostsController:

/** * Store a newly created resource in storage. * * @param \Illuminate\Http\Request $request * @return \Illuminate\Http\Response */public function store(Request $request){ $request->user()->posts()->create($request->validate([ 'title' => ['required'], 'content' => ['required'], ])); return redirect()->route('dashboard');}Alright, if you try to create a post, you will get redirected back to the dashboard route and you should see the new post at the top. Nice!

Now, let's implement the posts.show route. So, add a show action the PostsController:

/** * Display the specified resource. * * @param \App\Models\Post $post * @return \Illuminate\Http\Response */public function show(Post $post){ return view('posts.show', [ 'post' => $post, ]);}And create the view file at resources/views/posts/show.blade.php with the content:

<x-app-layout> <x-slot name="header"> <h2 class="text-xl font-semibold leading-tight text-gray-800"> <a href="{{ route('dashboard') }}">Dashboard</a> / Post #{{ $post->id }} </h2> </x-slot> <div class="py-12"> <div class="mx-auto max-w-7xl sm:px-6 lg:px-8"> <div class="p-8 bg-white rounded-lg"> <div class="relative"> <div class="pb-6 text-xl font-semibold border-b"> {{ $post->title }} </div> <div class="absolute top-0 right-0" x-data x-on:click.away="$refs.details.removeAttribute('open')"> <details class="relative" x-ref="details"> <summary class="list-none" x-ref="summary"> <button type="button" x-on:click="$refs.summary.click()" class="text-gray-400 hover:text-gray-500"> <x-icon type="dots-circle" /> </button> </summary> <div class="absolute right-0 top-6"> <ul class="w-40 px-4 py-2 bg-white border divide-y rounded rounded-rt-0"> <li class="py-2"><a class="block w-full text-left" href="{{ route('posts.edit', $post) }}">Edit</a></li> <li class="py-2"><button class="block w-full text-left" form="delete_post">Delete</button></li> </ul> <form id="delete_post" x-on:submit="if (! confirm('Are you sure you want to delete this post?')) { return false; }" action="{{ route('posts.destroy', $post) }}" method="POST"> @csrf @method('DELETE') </form> </div> </details> </div> </div> <div class="mt-4"> {{ $post->content }} </div> </div> </div> </div></x-app-layout>This view uses an x-icon component, which uses a Heroicons SVG. You can create with this:

@props(['type']) <svg class="w-6 h-6" fill="none" stroke="currentColor" viewBox="0 0 24 24" xmlns="http://www.w3.org/2000/svg"> @if ($type === 'dots-circle') <path stroke-linecap="round" stroke-linejoin="round" stroke-width="2" d="M8 12h.01M12 12h.01M16 12h.01M21 12a9 9 0 11-18 0 9 9 0 0118 0z"></path> @endif</svg>With that, once you click in a post, you will see the entire post content. There's a dropdown here where you can see the "Edit" and "Delete" actions. Let's add the "destroy" action to the PostsController:

/** * Remove the specified resource from storage. * * @param \App\Models\Post $post * @return \Illuminate\Http\Response */public function destroy(Post $post){ $post->delete(); return redirect()->route('dashboard');}This should make the delete action work. Now, let's create the edit action so we can edit posts. Add the edit and update actions to the PostsController:

/** * Show the form for editing the specified resource. * * @param \App\Models\Post $post * @return \Illuminate\Http\Response */public function edit(Post $post){ return view('posts.edit', [ 'post' => $post, ]);} /** * Update the specified resource in storage. * * @param \Illuminate\Http\Request $request * @param \App\Models\Post $post * @return \Illuminate\Http\Response */public function update(Request $request, Post $post){ $post->update($request->validate([ 'title' => ['required', 'min:3', 'max:255'], 'content' => ['required'], ])); return redirect()->route('posts.show', $post);}Next, add the edit.blade.php view at resources/views/posts/edit.blade.php with the contents:

<x-app-layout> <x-slot name="header"> <h2 class="text-xl font-semibold leading-tight text-gray-800"> <a href="{{ route('dashboard')}} ">Dashboard</a> / {{ __('Edit Post #:id', ['id' => $post->id]) }} </h2> </x-slot> <div class="py-12"> <div class="mx-auto max-w-7xl sm:px-6 lg:px-8"> <div class="p-8 bg-white rounded-lg"> <div id="edit_post"> <x-posts.form :post="$post" /> </div> </div> </div> </div></x-app-layout>This will make use of the same form used to create posts, so we need to make some tweaks to it:

<form method="POST" action="{{ $post->exists ? route('posts.update', $post) : route('posts.store') }}"> @csrf @if ($post->exists) @method('PUT') @endif <!-- Post Title --> <div> <x-label for="title" :value="__('Title')" /> <x-input id="title" class="block w-full mt-1" placeholder="Type the title..." type="text" name="title" :value="old('title', $post->title)" required autofocus /> <x-input-validation for="title" /> </div> <!-- Post Content --> <div class="mt-4"> <x-label for="content" :value="__('Content')" class="mb-1" /> <x-forms.richtext id="content" name="content" :value="$post->content" /> <x-input-validation for="content" /> </div> <div class="flex items-center justify-between mt-4"> <div> @if ($post->exists) <a href="{{ route('posts.show', $post) }}">Cancel</a> @else <a href="{{ route('dashboard') }}">Cancel</a> @endif </div> <div class="flex items-center justify-end"> <x-button class="ml-3"> {{ __('Save') }} </x-button> </div> </div></form>With these changes, the form will post to the update action if the post model already exists or to the create action if it's a new instance. Similarly, the cancel link will lead the user to dashboard if it's a new instance or to the posts.show route if the post already exists.

That's it for the first part of this tutorial. We now have a fully functioning basic application where users can create keep track of their thoughts. We're still using just a simple textarea field. It's time to install Trix and the Rich Text Laravel package.

Use the Rich Text Laravel Package

To install the package, we can run:

composer require tonysm/rich-text-laravelNext, run the package's install command:

php artisan richtext:installThis will do:

- Publish the

create_rich_texts_tablemigration - Add

trixto thepackage.jsonfile as a dev dependency - Publish the Trix bootstrap file to

resources/js/libs/trix.js

Let's import that file in the resources/js/app.js file:

require('./bootstrap.js'); require('alpinejs'); require('./libs/trix.js');Then, add the Trix styles to the resources/css/app.css file:

/** These are specific for the tag that will be added to the rich text content */.trix-content .attachment-gallery > .attachment,.trix-content .attachment-gallery > rich-text-attachment { flex: 1 0 33%; padding: 0 0.5em; max-width: 33%;} .trix-content .attachment-gallery.attachment-gallery--2 > .attachment,.trix-content .attachment-gallery.attachment-gallery--2 > rich-text-attachment,.trix-content .attachment-gallery.attachment-gallery--4 > .attachment,.trix-content .attachment-gallery.attachment-gallery--4 > rich-text-attachment { flex-basis: 50%; max-width: 50%;} .trix-content rich-text-attachment .attachment { padding: 0 !important; max-width: 100% !important;} /** These are TailwindCSS specific tweaks */.trix-content { @apply w-full;} .trix-content h1 { font-size: 1.25rem !important; line-height: 1.25rem !important; @apply leading-5 font-semibold mb-4;} .trix-content a:not(.no-underline) { @apply underline;} .trix-content ul { list-style-type: disc; padding-left: 2.5rem;} .trix-content ol { list-style-type: decimal; padding-left: 2.5rem;} .trix-content img { margin: 0 auto;}Let's install Trix and compile the assets:

npm install && npm run devBy default, the Rich Text Laravel package ships with a suggested database structure. All Rich Text contents will live in the rich_texts table. Now, we need to migrate our content field from the posts table and create rich_text entries for each existing post. If you're starting a new application with the package, you can skip this part. I just wanted to demo how you could do a simple migration.

Create the migration:

php artisan make:migration migrate_posts_content_field_to_the_rich_text_tableChange the up method of the newly created migration to add the following content:

foreach (DB::table('posts')->oldest('id')->cursor() as $post){ DB::table('rich_texts')->insert([ 'field' => 'content', 'body' => '<div>' . $post->content . '</div>', 'record_type' => (new Post)->getMorphClass(), 'record_id' => $post->id, 'created_at' => $post->created_at, 'updated_at' => $post->updated_at, ]);} Schema::table('posts', function (Blueprint $table) { $table->dropColumn('content');});Since the RichText model is a polymorphic one, let's enforce the morphmap so we avoid storing the class's FQCN in the database by adding the following line in the boot method of theAppServiceProvider:

Relation::enforceMorphMap([ 'post' => \App\Models\Post::class,]);Now, let's add the HasRichText trait to the Post model and define our content field as a Rich Text field:

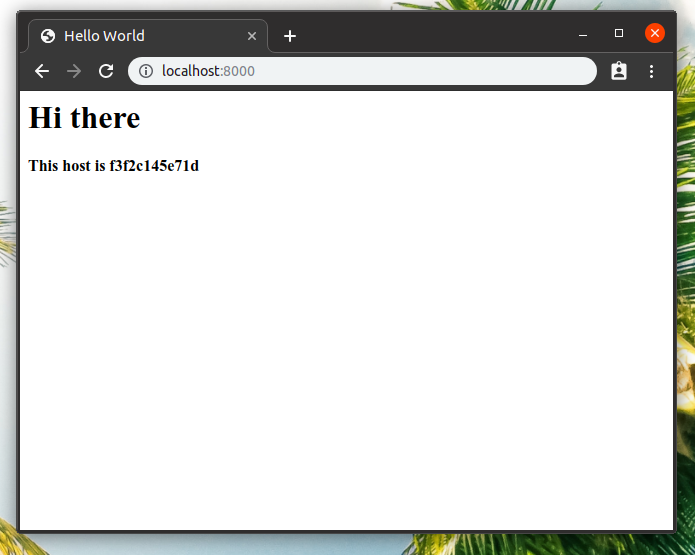

<?php namespace App\Models; use Illuminate\Database\Eloquent\Factories\HasFactory;use Illuminate\Database\Eloquent\Model;use Tonysm\RichTextLaravel\Models\Traits\HasRichText; class Post extends Model{ use HasFactory; use HasRichText; protected $guarded = []; protected $richTextFields = [ 'content', ];}Right now, the application is not working as you would expect. If you try to open it in the browser, you will see that it's not really behaving properly. First, we can see the <div> tag in the output both in the dashboard and in the posts.show routes. Let's fix the dashboard route first.

This will be a good opportunity to show a feature of the package: it can convert any Rich Text content to plain text! To achieve that, change the card component to be the following:

-{{ Str::limit($post->content, 300) }}+{{ Str::limit($post->content->toPlainText(), 300) }}Before, our content field was just a simple text field. Now, we get an instance of the RichText model, which forwards calls to the underlying Content class. The Content class has some really cool methods, such as the toPlainText() we see here.

With the card component taken care of, let's see what we can do for the posts.show route. It's still displaying the HTML tags. That's because Laravel's Blade will escape any HTML content when you're echoing out using double curly braces {{ }}, and that's not what we want. We need to let the HTML render on the page so any other tag such as h1s or ul created by Trix also display correctly.

Achieving that is relatively simple: use {!! !!} instead of {{ }}. However, there's a serious gotcha here that allows malicious users to exploit an XSS attack. We'll talk more about that in the next section. For now, let's make the naive change:

-{{ $post->content }}+{!! $post->content !!}And voilà! The HTML tags are no longer being escaped and the HTML content is rendering again. Cool.

One last piece before we jump to the next section. We are still using a textarea in our form. Let's replace it with the Trix editor. Trix is already installed and assets should have been compiled earlier, so I think we're ready. Change the contents of the richtext form component to this:

@props(['id', 'value', 'name', 'disabled' => false]) <input type="hidden" id="{{ $id }}_input" name="{{ $name }}" value="{{ $value?->toTrixHtml() }}" /><trix-editor id="{{ $id }}" input="{{ $id }}_input" {{ $disabled ? 'disabled' : '' }} {!! $attributes->merge(['class' => 'trix-content rounded-md shadow-sm border-gray-300 focus:border-indigo-300 focus:ring focus:ring-indigo-200 focus:ring-opacity-50']) !!}></trix-editor>Open up the browser again and you should see the Trix editor! Ain't this cool? Make some changes to the content and submit the form. Everything should be working as before.

There are two HTML elements here to make Trix work as we want: the input and the trix-editor elements. The input is hidden, so users don't actually see it, but this is the input that will be submitted by the browser containing the latest state of the HTML content for our field. We feed it using the toTrixHtml() method that we get from our Content class. Trix will take care of keeping the state from the editor in sync with the value of the input field, so you don't have to worry about that.

Now, let's handle the XSS attack vector we enabled by outputting non-escaped HTML content.

HTML Sanitization

Before we fix the issue, let's exploit it ourselves. Go to your browser, open the create posts form, open up your DevTools, find and delete the trix-editor element and change the hidden input type to text so the input is displayed. Now, replace its value with a script tag, like so:

<script>alert('hey, there');</script>Submit the form and got to that post's show page. Oh, noes. The JavaScript was executed by the browser. We don't want that, right? We can fix it with a technique called HTML Sanitization. We don't actually need to allow the entire HTML spec to be rendered. We only need a subset of it so our rich text content is displayed correctly. So, for one, we don't need to render any <script> tag. We cannot use something like PHP's strip_tags function, because that would get rid of all tags, so our <b> or <a> tags would be gone. We could maybe pass it a list of allowed HTML tags, but we could still be exploited using some HTML attributes.

Instead, let's use a package that will handle most of the work for us. That's mews/purifier:

composer require mews/purifierThe package gives us a clean() helper function that we can use to display sanitized. Let's change our posts/show.blade.php view to use that function:

-{!! $post->content !!}+{!! clean($post->content) !!}If you check that out in the browser you will notice that you no longer see the alert! So our problem was fixed. We still need to make some tweaks to the Sanitizer's default configs, but for now, that will do. Try out some rich text tweaks and see if they are displayed correctly. Most of them should.

Before we change the configs, let's explore one side of Trix that's not currently working: image uploads.

Simple Image Uploading

If you try to attach an image to Trix, it's not working out of the box. The image kinda shows up, but in a "pending" state, which means that this change was actually not made to the Trix document. See, Trix doesn't know how our application handles image upload, so it's up to us help it.

Let's use Alpine.js, which already comes installed with Breeze, to implement image uploading. First, let's cover the client-side of image uploading. Open up the richtext.blade.php component, and initialize Alpine in the trix-editor element:

<trix-editor x-data="{ // ... }"></trix-editor>Cool. Trix will dispatch a custom event called trix-attachment-add whenever you attempt to upload an attachment. We need to listen to that event and do the upload. The event will contain the file we have to upload as well as the Trix.Attachment instance object which we'll use later to set some attributes on it so we can tell Trix the attachment is no longer pending so it can update the Document state:

@props(['id', 'value', 'name', 'disabled' => false]) <input type="hidden" id="{{ $id }}_input" name="{{ $name }}" value="{{ $value?->toTrixHtml() }}"/><trix-editor id="{{ $id }}" input="{{ $id }}_input" {{ $disabled ? 'disabled' : '' }} {!! $attributes->merge(['class' => 'trix-content rounded-md shadow-sm border-gray-300 focus:border-indigo-300 focus:ring focus:ring-indigo-200 focus:ring-opacity-50']) !!} x-data="{ upload(event) { const data = new FormData(); data.append('attachment', event.attachment.file); window.axios.post('/attachments', data, { onUploadProgress(progressEvent) { event.attachment.setUploadProgress( progressEvent.loaded / progressEvent.total * 100 ); }, }).then(({ data }) => { event.attachment.setAttributes({ url: data.image_url, }); }); } }" x-on:trix-attachment-add="upload"></trix-editor>That's cool. We're sending a request to POST /attachments with an attachment field and we expect a image_url in the response data. Let's implement the server-side for that. We'll simply add a route Closure to our web.php routes file for now:

Route::post('/attachments', function () { request()->validate([ 'attachment' => ['required', 'file'], ]); $path = request()->file('attachment')->store('trix-attachments', 'public'); return [ 'image_url' => Storage::disk('public')->url($path), ];})->middleware(['auth'])->name('attachments.store');If you try to attach an image now, uploading should just work! But there should be a problem when you visit that post's show page: the image is broken. Let's publish the config so we can tweak it a little bit:

php artisan vendor:publish --provider="Mews\Purifier\PurifierServiceProvider"Now, open up the /config/purifier.php and replace its contents:

<?php return [ 'encoding' => 'UTF-8', 'finalize' => true, 'ignoreNonStrings' => false, 'cachePath' => storage_path('app/purifier'), 'cacheFileMode' => 0755, 'settings' => [ 'default' => [ 'HTML.Doctype' => 'HTML 4.01 Transitional', 'HTML.Allowed' => 'rich-text-attachment[sgid|content-type|url|href|filename|filesize|height|width|previewable|presentation|caption|data-trix-attachment|data-trix-attributes],div,b,strong,i,em,u,a[href|title|data-turbo-frame],ul,ol,li,p[style],br,span[style],img[width|height|alt|src],del,h1,blockquote,figure[data-trix-attributes|data-trix-attachment],figcaption,pre,*[class]', 'CSS.AllowedProperties' => 'font,font-size,font-weight,font-style,font-family,text-decoration,padding-left,color,background-color,text-align', 'AutoFormat.AutoParagraph' => true, 'AutoFormat.RemoveEmpty' => true, ], 'test' => [ 'Attr.EnableID' => 'true', ], "youtube" => [ "HTML.SafeIframe" => 'true', "URI.SafeIframeRegexp" => "%^(http://|https://|//)(www.youtube.com/embed/|player.vimeo.com/video/)%", ], 'custom_definition' => [ 'id' => 'html5-definitions', 'rev' => 1, 'debug' => false, 'elements' => [ // http://developers.whatwg.org/sections.html ['section', 'Block', 'Flow', 'Common'], ['nav', 'Block', 'Flow', 'Common'], ['article', 'Block', 'Flow', 'Common'], ['aside', 'Block', 'Flow', 'Common'], ['header', 'Block', 'Flow', 'Common'], ['footer', 'Block', 'Flow', 'Common'], // Content model actually excludes several tags, not modelled here ['address', 'Block', 'Flow', 'Common'], ['hgroup', 'Block', 'Required: h1 | h2 | h3 | h4 | h5 | h6', 'Common'], // http://developers.whatwg.org/grouping-content.html ['figure', 'Block', 'Optional: (figcaption, Flow) | (Flow, figcaption) | Flow', 'Common'], ['figcaption', 'Inline', 'Flow', 'Common'], // http://developers.whatwg.org/the-video-element.html#the-video-element ['video', 'Block', 'Optional: (source, Flow) | (Flow, source) | Flow', 'Common', [ 'src' => 'URI', 'type' => 'Text', 'width' => 'Length', 'height' => 'Length', 'poster' => 'URI', 'preload' => 'Enum#auto,metadata,none', 'controls' => 'Bool', ]], ['source', 'Block', 'Flow', 'Common', [ 'src' => 'URI', 'type' => 'Text', ]], // http://developers.whatwg.org/text-level-semantics.html ['s', 'Inline', 'Inline', 'Common'], ['var', 'Inline', 'Inline', 'Common'], ['sub', 'Inline', 'Inline', 'Common'], ['sup', 'Inline', 'Inline', 'Common'], ['mark', 'Inline', 'Inline', 'Common'], ['wbr', 'Inline', 'Empty', 'Core'], // http://developers.whatwg.org/edits.html ['ins', 'Block', 'Flow', 'Common', ['cite' => 'URI', 'datetime' => 'CDATA']], ['del', 'Block', 'Flow', 'Common', ['cite' => 'URI', 'datetime' => 'CDATA']], // RichTextLaravel ['rich-text-attachment', 'Block', 'Flow', 'Common'], ], 'attributes' => [ ['iframe', 'allowfullscreen', 'Bool'], ['table', 'height', 'Text'], ['td', 'border', 'Text'], ['th', 'border', 'Text'], ['tr', 'width', 'Text'], ['tr', 'height', 'Text'], ['tr', 'border', 'Text'], ], ], 'custom_attributes' => [ ['a', 'target', 'Enum#_blank,_self,_target,_top'], // RichTextLaravel ['a', 'data-turbo-frame', 'Text'], ['img', 'class', new HTMLPurifier_AttrDef_Text()], ['rich-text-attachment', 'sgid', new HTMLPurifier_AttrDef_Text], ['rich-text-attachment', 'content-type', new HTMLPurifier_AttrDef_Text], ['rich-text-attachment', 'url', new HTMLPurifier_AttrDef_Text], ['rich-text-attachment', 'href', new HTMLPurifier_AttrDef_Text], ['rich-text-attachment', 'filename', new HTMLPurifier_AttrDef_Text], ['rich-text-attachment', 'filesize', new HTMLPurifier_AttrDef_Text], ['rich-text-attachment', 'height', new HTMLPurifier_AttrDef_Text], ['rich-text-attachment', 'width', new HTMLPurifier_AttrDef_Text], ['rich-text-attachment', 'previewable', new HTMLPurifier_AttrDef_Text], ['rich-text-attachment', 'presentation', new HTMLPurifier_AttrDef_Text], ['rich-text-attachment', 'caption', new HTMLPurifier_AttrDef_Text], ['rich-text-attachment', 'data-trix-attachment', new HTMLPurifier_AttrDef_Text], ['rich-text-attachment', 'data-trix-attributes', new HTMLPurifier_AttrDef_Text], ['figure', 'data-trix-attachment', new HTMLPurifier_AttrDef_Text], ['figure', 'data-trix-attributes', new HTMLPurifier_AttrDef_Text], ], 'custom_elements' => [ ['u', 'Inline', 'Inline', 'Common'], // RichTextLaravel ['rich-text-attachment', 'Block', 'Flow', 'Common'], ], ], ];If you refresh the browser now you will see that our img tag is now wrapped with a figure tag. But it's still not working, right?

That's because we need to symlink the storage folder to our public/ directory locally so images uploaded to the public disk using the local driver are displayed correctly:

# If you're using Sail:sail artisan storage:link # Otherwise, use this:php artisan storage:linkThat should fix it! Great.

This will do it for an introductory guide, I think. I plan to write more advanced guides like User mentions and advanced image uploading using Spatie's Media Library package. I'll see you on the next post.

]]>Some cool design patterns I've learned recently:

— Tony Messias (@tonysmdev) May 8, 2021

- Method Object

- Double Dispatch (aka. Duet or Pas de Deux)

- Pluggable Behavior

🤓

And Freek Van der Herten mentioned that I could cover them as blogposts. Here's is the first one. Well, technically, the second one. See, the first pattern I mentioned there called "Method Object" was already covered here in this blog in the post titled "When Objects Are Not Enough". Same idea. Which is cool. I've updated the post to add this reference.

Now to Double Dispatch!

Introduction

The computation of a method call is only dependent on the object receiving the method call. Most of the time that's enough. However, sometimes we need the computation to also depend on the argument being passed to the method call.

Think you have two hierarchies of objects interacting with each other and the computation of these interactions depends on both objects, not only in one of them. Maybe some examples will make this clearer.

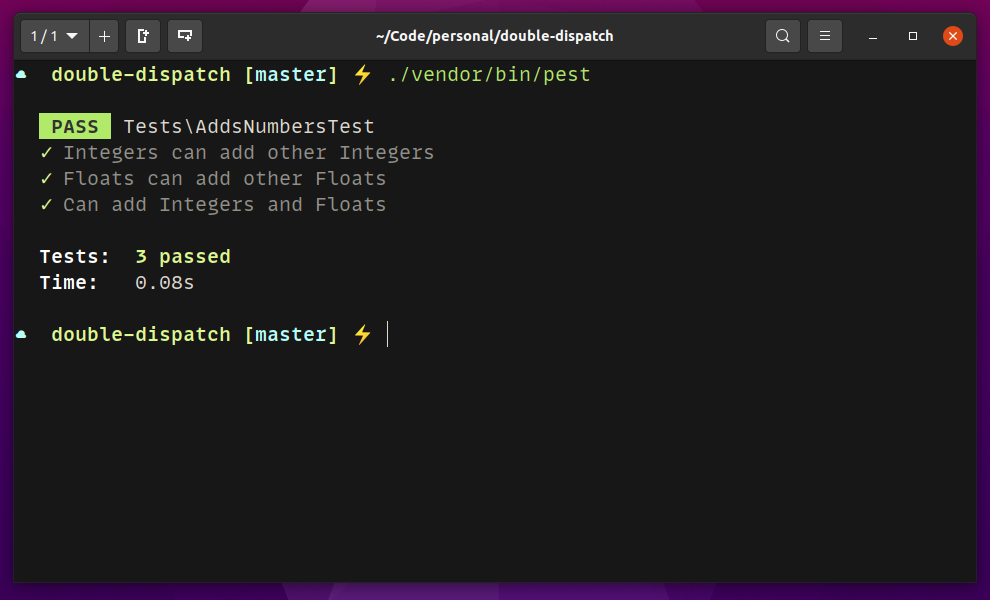

We're going to TDD our way through this pattern using Pest. Feel free to use whatever you want. All classes are in the same file as the test for the sake of the demo.

Example: Adding Integers and Floats

Let's get to the first example: adding numbers. For this example, let's imagine we are building the base classes for numbers in a language and that our language is not able to add primitives of the different types.

We'll start with the use case of adding only integers:

declare(strict_types = 1); test('adds integers', function () { $first = new IntegerNumber(40); $second = new IntegerNumber(2); $this->assertSame(42, $first->add($second)->value);});Let's add IntegerNumber class to the top of the test file to make the test pass (right below the declare() call):

class IntegerNumber{ public function __construct(public int $value) {} public function add($number) { return new IntegerNumber($this->value + $number->value); }}That works. Notice that we added a declare(strict_types = 1); to the PHP file. I did this because PHP is very smart and is able to sum integers and floats, so I wanted to force us to manually cast the values for the purpose of this example.

Let's add test for adding floats:

test('adds floats', function () { $first = new FloatNumber(40.0); $second = new FloatNumber(2.0); $this->assertSame(42.0, $first->add($second)->value);});And, to make it pass, let's add the FloatNumber class:

class FloatNumber{ public function __construct(public float $value) {} public function add($number) { return new FloatNumber($this->value + $number->value); }}Our tests should be green. So far, so good. Let's add our first cross-addition: adding integers and floats.

test('adds integers and floats', function () { $first = new IntegerNumber(40); $second = new FloatNumber(2.0); $this->assertSame(42, $first->add($second)->value); $this->assertSame(42.0, $second->add($first)->value);});OK, how can we get that one working? The answer is: Double Dispatch. The pattern states the following:

Send a message to the argument. Append the class name of the receiver to the selector. Pass the receiver as an argument. (Kent Beck in "Smalltalk Best Practice Patterns", pg. 56)

This was in Smalltalk. For us, the selector is the method name (or close enough). Let's apply the pattern. First, let's handle our first use case adding integers:

class IntegerNumber{ public function __construct(public int $value) {} public function add($number) { return $number->addInteger($this); } public function addInteger(IntegerNumber $number) { return new IntegerNumber($this->value + $number->value); }}If we run the first test, it should still pass. That's because we're adding two instances of the IntegerNumber class. The receiver of the add() message will call the addInteger on the argument and pass itself to it. At that point, we have two integer primitives, so we can return a new instance summing the primitives.

Now, let's make a similar change to the FloatNumber class:

class FloatNumber{ public function __construct(public float $value) {} public function add($number) { return $number->addFloat($this); } public function addFloat(FloatNumber $number) { return new FloatNumber($this->value + $number->value); }}Our first two tests should be passing now. Nice! Let's now add the cross methods. First, an integer only knows how to add other integers (primitives). Similarly, floats should only know how to add their own primitives. However, integers should be able to convert themselves to floats and vice-versa. This will allow us to add floats and integers together.

When a Float Number instance receives the add() message with an instance of the IntegerNumber class, it will call the addFloat on the argument, and pass itself to it. So we need an addFloat(FloatNumber $number) method on the IntegerNumber class. As we discussed, an IntegerNumber number doesn't know how to sum floats, but it knows how to convert itself to a float. And who knows how to add two floats together? The FloatNumber instance! So, at that point, the IntegerNumber instance will cast itself to Float and call the addFloat() on the float number instance with that. Then, the float number does the primitive addition and returns a new instance of a FloatNumber.

Similarly, when an Integer Number instance receives the add() message with an instance of a FloatNumber class, it will call addInteger on it, passing itself to it. Then, the Float Number will cast itself to an integer and pass that back to the integer calling addInteger. Again, at that point, Integer can do the primitive addition and return a new instance of an IntegerNumber class.

Here's the final solution for both the IntegerNumber and the FloatNumber classes:

class IntegerNumber{ public function __construct(public int $value) {} public function add($number) { return $number->addInteger($this); } public function addInteger(IntegerNumber $number) { return new IntegerNumber($this->value + $number->value); } public function addFloat(FloatNumber $number) { return $number->addFloat($this->asFloat()); } private function asFloat() { return new FloatNumber(floatval($this->value)); }} class FloatNumber{ public function __construct(public float $value) {} public function add($number) { return $number->addFloat($this); } public function addFloat(FloatNumber $number) { return new FloatNumber($this->value + $number->value); } public function addInteger(IntegerNumber $number) { return $number->addInteger($this->asInteger()); } public function asInteger() { return new IntegerNumber(intval($this->value)); }}

It works! Nice. If you're like me, you're now delighted with such a sophisticated implementation.

Isn't this cool?

Example: Star Trek

OK, the numbers example was cool and all, but chances are we're not implementing a language. Is this even useful anywhere else? Well, the important thing about a pattern is the design, not the implementation. You can re-use the same design on different contexts.

Let's say we're building a Star Trek game. We'll control a spaceship and there might be some enemies along the way, so they have to fight. Some enemies will be critical while others will not cause any damage depending on the spaceship.

So we have two hierarchies at play here: Spaceships and Enemies. And the computation of the combat depends on both of them. Perfect use case for the Double Dispatch pattern.

Let's start with a simple case: an asteroid and a space shuttle. The asteroid damages the shuttle, but not critically:

test('asteroid damages shuttle', function () { $spaceship = new Shuttle(hitpoints: 100); $enemy = new Asteroid(); $spaceship->fight($enemy); $this->assertEquals(90, $spaceship->hitpoints);});The implementation would be something like this:

class Shuttle{ public function __construct(public int $hitpoints) {} public function fight($enemy) { $this->hitpoints -= $enemy->damage(); }} class Asteroid{ public function damage() { return 10; }}The test should be green. Nice. Let's add another spaceship. The USS Voyager should not receive any damage from an Asteroid.

test('asteroid does not damage uss voyager', function () { $spaceship = new UssVoyager(hitpoints: $initialHitpoints = 100); $enemy = new Asteroid(); $spaceship->fight($enemy); $this->assertSame($initialHitpoints, $spaceship->hitpoints);});Let's implement our new spaceship:

class UssVoyager{ public function __construct(public int $hitpoints) {} public function fight($enemy) { // Nothing happens. }}Our tests should be green now. Uhm... it looks weird, right? Let's add another enemy and see if it this design still works. Our new enemy is a Borg Cube. Borgs will assimilate any spaceship (resistance is futile).

Let's start with a test for the Shuttle facing the Borg Cube:

test('borg cube critically damages the shuttle', function () { $spaceship = new Shuttle(hitpoints: 100); $enemy = new BorgCube(); $spaceship->fight($enemy); $this->assertSame(0, $spaceship->hitpoints);});Let's implement the Borg Cube enemy:

class BorgCube{ public function damage() { return 100; }}OK, our test should be green. Let's add another test before we refactor this. Borgs will also assimilate the USS Voyager:

test('borg cube critically damages the uss voyager', function () { $spaceship = new UssVoyager(hitpoints: 100); $enemy = new BorgCube(); $spaceship->fight($enemy); $this->assertSame(0, $spaceship->hitpoints);});And... red. Tests are failing. That's because so far nothing damaged the USS Voyager. I think it's time to apply the pattern. First, let's send a message to the enemy, append the spaceship name to the message and pass it along as an argument:

class Shuttle{ public function __construct(public int $hitpoints) {} public function fight($enemy) { $enemy->fightShuttle($this); }} class UssVoyager{ public function __construct(public int $hitpoints) {} public function fight($enemy) { $enemy->fightUssVoyager($this); }} class Asteroid{ public function fightShuttle(Shuttle $shuttle) { $shuttle->hitpoints -= 10; } public function fightUssVoyager(UssVoyager $ussVoyager) { // Does nothing... }} class BorgCube{ public function fightShuttle(Shuttle $shuttle) { $shuttle->hitpoints = 0; } public function fightUssVoyager(UssVoyager $ussVoyager) { $ussVoyager->hitpoints = 0; }}If we extract an Enemy interface here, we would have something like this:

interface Enemy{ public function fightShuttle(Shuttle $shuttle); public function fightUssVoyager(UssVoyager $ussVoyager);}If we add a new enemy to the system, we know we only have to implement the enemy interface and it should Just Work™. Adding a new spaceship? We also need to add it to the enemy interface.

Conclusion

This is not always flowers and sunshine, though. There is a bunch of indirection at play here. The alternative would involve a couple if/switch statements around, so I think it's worth it.

You might think this is similar to the Visitor Pattern, and that's true. The Visitor Pattern solves the problem when Double Dispatch cannot be used (see the Wikipedia for Double Dispatch.) Also make sure to check out this video on the subject.

I had fun writing this piece. And I'm having a lot of fun reading the book. Let me know what you think.

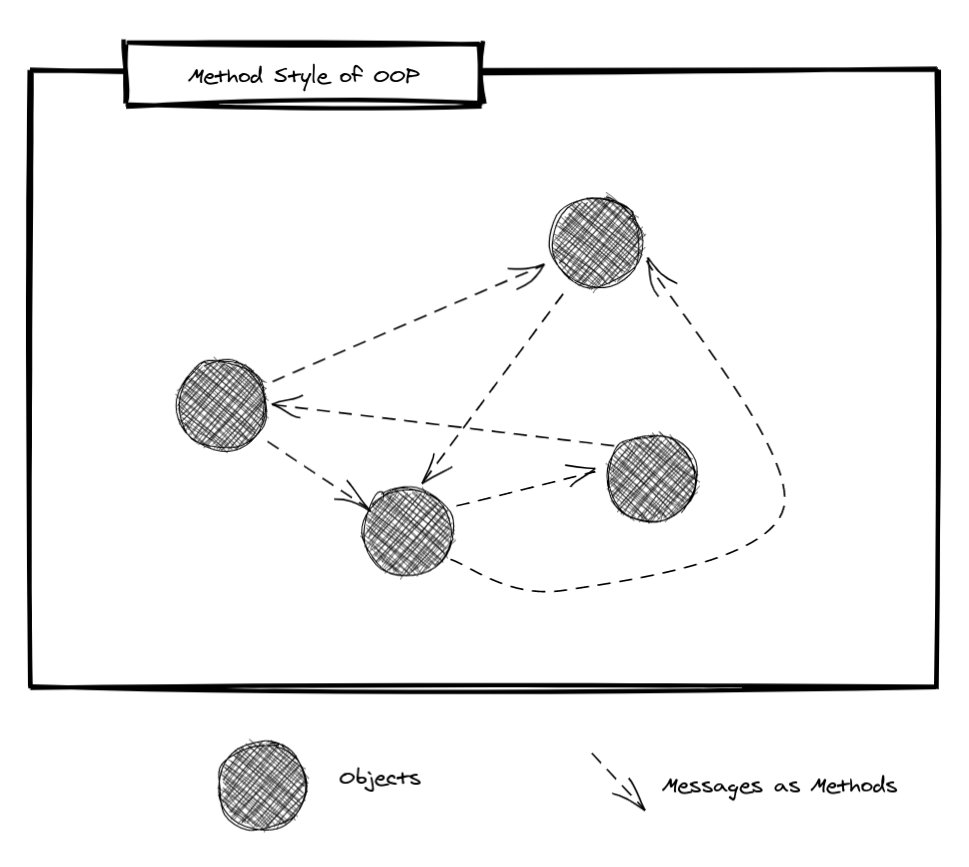

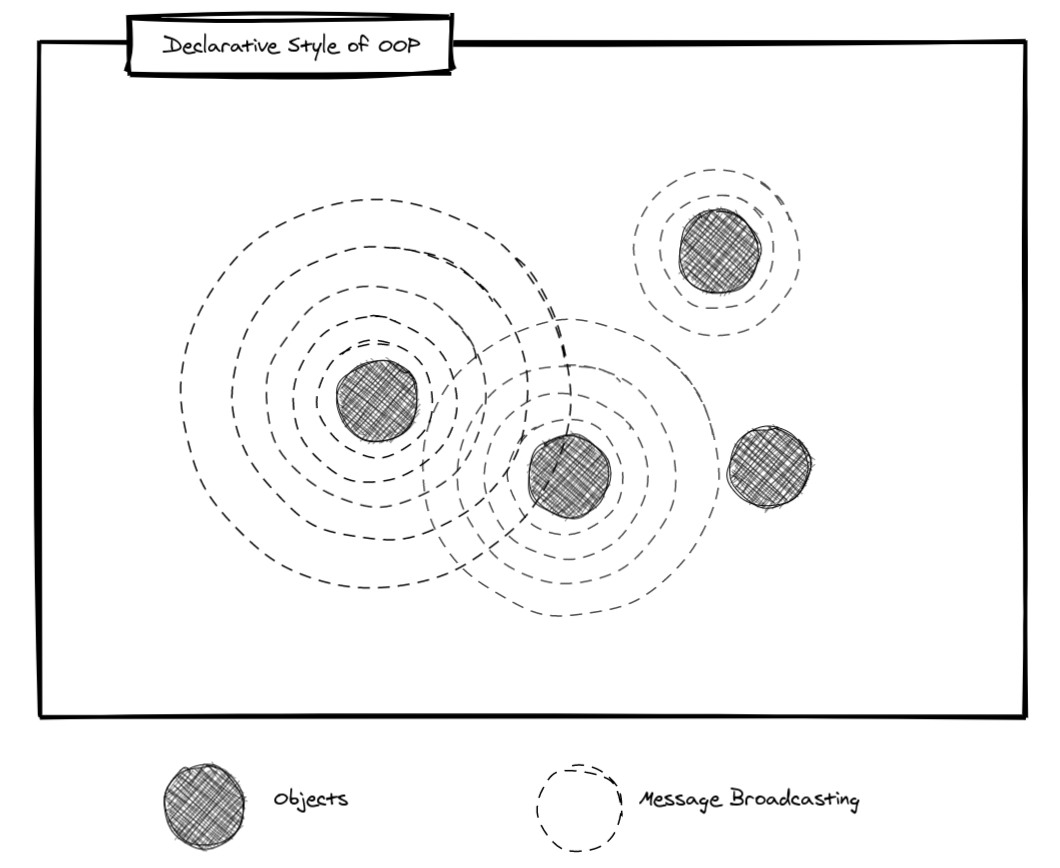

]]>This Actions pattern states that logic should be wrapped in Action classes. The idea isn't new as other communities have been advocating for "Clean Architecture" where each "Use Case" (or Interactor) would be its own class. It's similar. But is it really what OOP is about?

If you're interested in a TL;DR version of this article, here it is:

- Smalltalk was one of the first Object-Oriented Programming Languages out there. It's where ideas like inheritance and message-passing came from (or at least where they got popular, from what I understand);

- According to Alan Kay, who coined the term "Object-Oriented Programming", objects are not enough. They don't give us an Architecture. Objects are all about the interactions between them and, for large scale systems, you need to be able to break down your applications in modules in a way that allows you to turn off a module, replace it, and turn it back on without bringing the entire application down. That's where he mentions the idea of encapsulating "messages" in classes where each instance would be a message in our systems, backing up the idea of having "Action" classes or "Interactors" in the Clean Architecture approach;

Continue reading if this sparks your interest.

What Are Objects?

An object has state and operations combined. At the time where it was coined, applications were built with data structures and procedures. By combining state and operations in a single "entity" called an "object" you give this entity an anthropomorphic meaning. You can think of objects as "little beings". They know some information (state) and they can respond to messages sent to them.

Such messages usually take the form of method calls and this is the idea that got propagated in other languages such as Java or C++. Joe Armstrong, one of the co-designers of Erlang, wrote in the Elixir forum that, in Smalltalk, messages "were not real messages but disguised synchronous function calls", and this mistake was also repeated in other languages, according to him.

One common misconception seems to be on thinking of objects as types. Types (or Abstract Data Types, which are "synonyms" - or close enough - for the purpose of this writing) aren't objects. As Kay points out in this seminar, the way objects are used these days is a bit confusing because it's intertwined with another idea from the '60s: data abstraction (ADTs). They are similar in some ways, particularly in implementation, but its intent is different.

The intent of ADT, according to Kay, was to take a system in Pascal/FORTRAN that's starting to become difficult to change (where the knowledge has been spread out in procedures) and wrap envelopes around data structures, invoking operations by means of procedures in order to get it to be a bit more representation independent.

This envelope of procedures is then wrapped around the data structure in an effort to protect it. But then this new structure that was created is now treated as a new data structure in the system. The result is that the programs don't get small. One of the results of OOP is that programs tend to get smaller.

To Kay, Java and C++ are not good examples of "real OOP". Barbara Liskov points out that Java was a combination of ADT with the inheritance ideas from Smalltalk. To be honest, I can't articulate this difference between ADTs and Objects in OOP quite well. Maybe because I first learned OOP in Java.

One more fun fact about the early days: they were not sure if they were going to be able to implement polymorphism in strongly-typed languages (where the idea of ADT came from), since the compiler would link the types explicitly and nobody wanted to rewrite sorting functions for each different type, for example (Liskov mentions this in the already mentioned talk). As I see it, that's the problem interfaces/protocols and generics solve. In a way, I think of these things as ways to achieve late-binding in strongly-typed languages (and I also think this is true for some design patterns).

Kay doesn't seem to appreciate what this mix of ADT and OOP did to the original idea. He seems to agree with Armstrong. To Kay, Object-Oriented is about three things:

- Messaging (or message-passing);

- Local retention and protection and hiding of state-process (or encapsulation); and

- Extreme late-binding.

These are the traits of OOP, or "Real OOP" - as Kay calls it. The term got "hijacked" and somehow turned into, as Armstrong puts it, "organizing code into classes and methods". That's not what "Real OOP" is about.

Objects tend to be larger things than mere data structures. They tend to be entire components. Active machines that fit together with other active machines to make a new kind of structure.

Kay has an exercise of adding a negation to "core beliefs" in our field to try and identify what these things are really about. Take "big data", for instance. If we add a "not" to it, it says "NOT big data", so if it's NOT about big data, what would it be about? Well, "big meaning", as Kay points out.

If we do that with "Object-Oriented Programming" and add a "not" to it, we get "NOT Object-Oriented Programming", and if it's not about object-orientation, what is it about? Well, it seems to be Messages. That seems to be the core idea of OOP. Even though they were promoting inheritance a lot in the Smalltalk days. And yes, messaging was a big part of it too, but since it was practically "disguised synchronous function calls", they didn't get the main stage when the idea got mainstream.

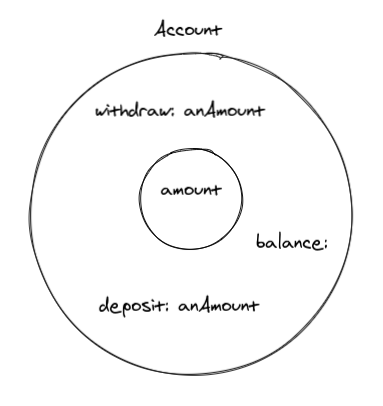

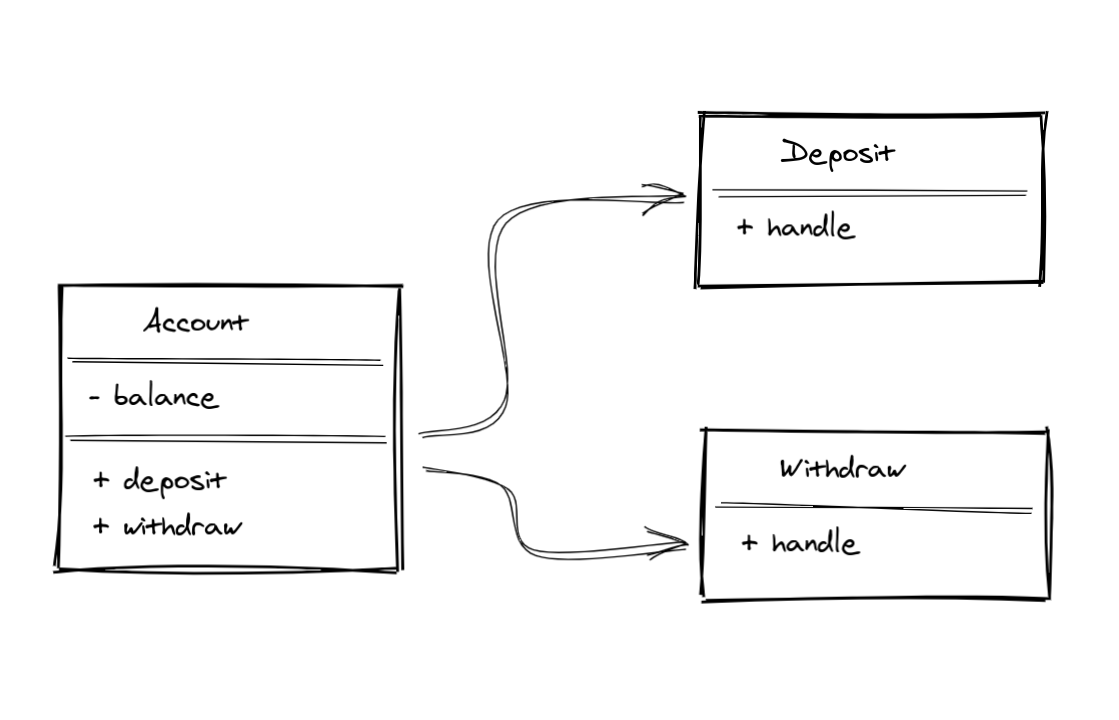

Let's use a banking software as an example. We're going to model an Account. An account needs to keep track of its balance. And it has to be able to handle withdraw, as long as the amount requested is less than the current balance amount. It also has to be able to handle deposits. The image below is a visual representation of what an Account object could be. Well, at least a simplification of that.

There are some guidelines on how to identify objects and methods in requirements: "nouns" are good candidates for "objects", while "verbs" are good candidates for "methods". That's only a guideline, which means they are "good defaults", but not hard rules.

Reification

OOP is really good at modeling abstract concepts. Things that are not tangible, but we can pretend they exist in the reality we're trying to build inside our software. They are objects (or "little beings"). The term Reification means to treat immaterial things as they were material. We use that all the time when we're writing software, especially in Object-Oriented Software. Our Account model is one example of reification.

It happens to fit the "noun" and "verb" guideline, because that makes sense in our context so far. Here's a simple example of a deposit:

class Account extends Model{ public function deposit(int $amountInCents) { DB::transaction(function () { $this->increment('balance_cents', $amountInCents); }); }}Notes on Active Record

The code examples are done in a Laravel context. I'm lucky enough to happen to own the databases I work with, so I don't consider that an outer layer of my apps (see this), which allows me to fully use the tools at hand, such as the Eloquent ORM - an Active Record implementation for the non-Laravel folks reading this. That's why I have database calls in the model. Not all classes in my domain model are Active Record models, though (see this). I recommend experimenting with different approaches so you can make up your own mind about these things. I'm just showing an alternative that I happen to like.

But that's not the end of the story. Sometimes, you need to break these "rules", depending on your use case. For instance, you might have to keep track of every transaction happening to an Account. You could try to model this around the relevant domain methods, maybe using events and listeners. That could work. However let's say you have to be able to schedule a transfer or an invoice payment, or even cancel these if they are not due yet. If you listen closely, you can almost hear the system asking for something.

Knowing only its balance isn't that useful when you think of an Account. You have 100k dollars on it, sure, but how did it get there? These are the kind of things we should be able to know, don't you think? Also, if you model everything around Account, it tends to grow to a point of becoming God objects.

This is where people turn to other approaches like Event Sourcing. And that could be the answer, as the primary example for it is a banking system. But there is an Object-Oriented way to model this problem.

The trick is realizing our context has changed. Now, we need to focus on the transactions happening to the account (only "withdraw" and "deposit" for now). They deserve the main stage in our application. We will promote these operations to objects, calling them transactions. And those objects can have their own state. The public API of the account wouldn't change, only its internals.

Instead of simply manipulating the balance state, the Account object will create instances of each transaction and also keep track of them internally. But that's not all. Each transaction has a different effect on the account's balance. A deposit will increment it, while a withdraw will decrement it. This serves as an example for another important concept of Object-Oriented Programming: Polymorphism.

Polymorphism

Polymorphism means: multiple forms. The idea is that I can build different implementations that conform to the same API (interface, protocol, or duck test). This fits exactly our definition of the different transactions. They are all transactions, but with different application on the Account. When modeling this with ActiveRecord models, we could have the following:

- An Account (AR model) holds a sorted list of all transactions

- A Transaction would be an AR model and would have a polymorphic relationship called "transactionable"

- Each different transaction would conform to this "transactionable" behavior

The trick would be to have the Account model never touching its balance directly. The balance field would almost serve as a cached value of the result of every applied Transaction of that account. The Account would then pass itself down to the Transaction expecting the transaction to update the balance. The Transaction, internally, would then delegate that task to each transactionable and they could update the balance. It sounds more complicated than it actually is, here's the deposit example:

use Illuminate\Database\Eloquent\Model; class Account extends Model{ public function transactions() { return $this->hasMany(Transaction::class)->latest(); } public function deposit(int $amountInCents) { DB::transaction(function () use ($amountInCents) { $transaction = $this->transactions()->create([ 'transactionable' => Deposit::create([ 'amount_cents' => $amountInCents, ]), ]); $transaction->apply($this); }); }} class Transaction extends Model{ public function transactionable() { return $this->morphTo(); } public function setTransactionableAttribute($transactionable) { $this->transactionable()->associate($transactionable); } public function apply(Account $account) { $this->transactionable->apply($account); }} class Deposit extends Model{ public function apply(Account $account) { $account->increment('balance_cents', $this->amount_cents); }}As you can see, the public API for the $account->deposit(100_00) behavior didn't change.

This same idea can be ported to other domains as well. For instance, if you have a document model in a collaborative text editing context, you cannot rely on having a single content text field holding the current state of the Document's content. You would need to apply a similar idea and keep track of each Operation Transformation happening to the document instead.

Another example could be an PaaS app. You have provisioned servers and you can deploy on them. With only this short description one could model it as $server->deploy(string $commitHash). But what if the user can cancel a deployment? Or rollback to a previous deployment? That change in requirements should trigger your curiosity to at least experiment promoting the deploy to its own Deployment object or something similar.

I first saw this idea presented by Adam Wathan on his Pushing Polymorphism to the Database article and conference talk. And I also found references in the book Smalltalk, Objects, and Design, as well as on a recent Rails PR done by DHH introducing delegated types. I find it really powerful and quite versatile, but I don't see that many people talking about it, so that's why I found it relevant to mention here.

Before we wrap up this Reification tangent, there's one more example I wanted to mention. When you have two entities collaborating on a behavior and the logic doesn't quite fit one or the other. Or the behavior could perfectly fit either of these entities. For instance, let's say you have a Student and a Course model and you want to keep track of their presence and grade (assuming we only have a single presence valeu that can either be present | absent and single grade value ranging from 0 to 10). Where do we store this data?

It should feel like it doesn't belong in the Course, nor in the Student records. It almost feels like the solution to this problem could be to give up on OOP entirely and use a function that you could pass both objects to. Or we could maybe store that value as a pivot field in a joint table. Instead, if we reify this problem, we could promote the Student/Course relationship to an Object called StudentCourse. That would make the perfect place to store the grade and presence. These are examples of reification.

Abstractions as Simplifications

I've talked about this idea before. I have a feeling that some people see abstractions as convoluted architectural decisions and as a synonym for "many layers", but that's not what I understand of abstractions. They are really simplifications.

Alan Kay has a good presentation on the subject and he states that we achieve simplicity when we find a more sophisticated building block for our theories. A model that better fits our domain and things "just make sense".

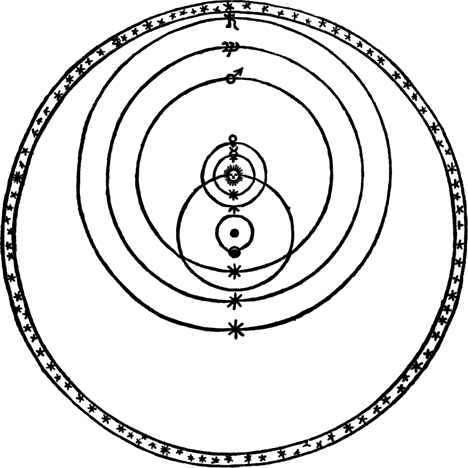

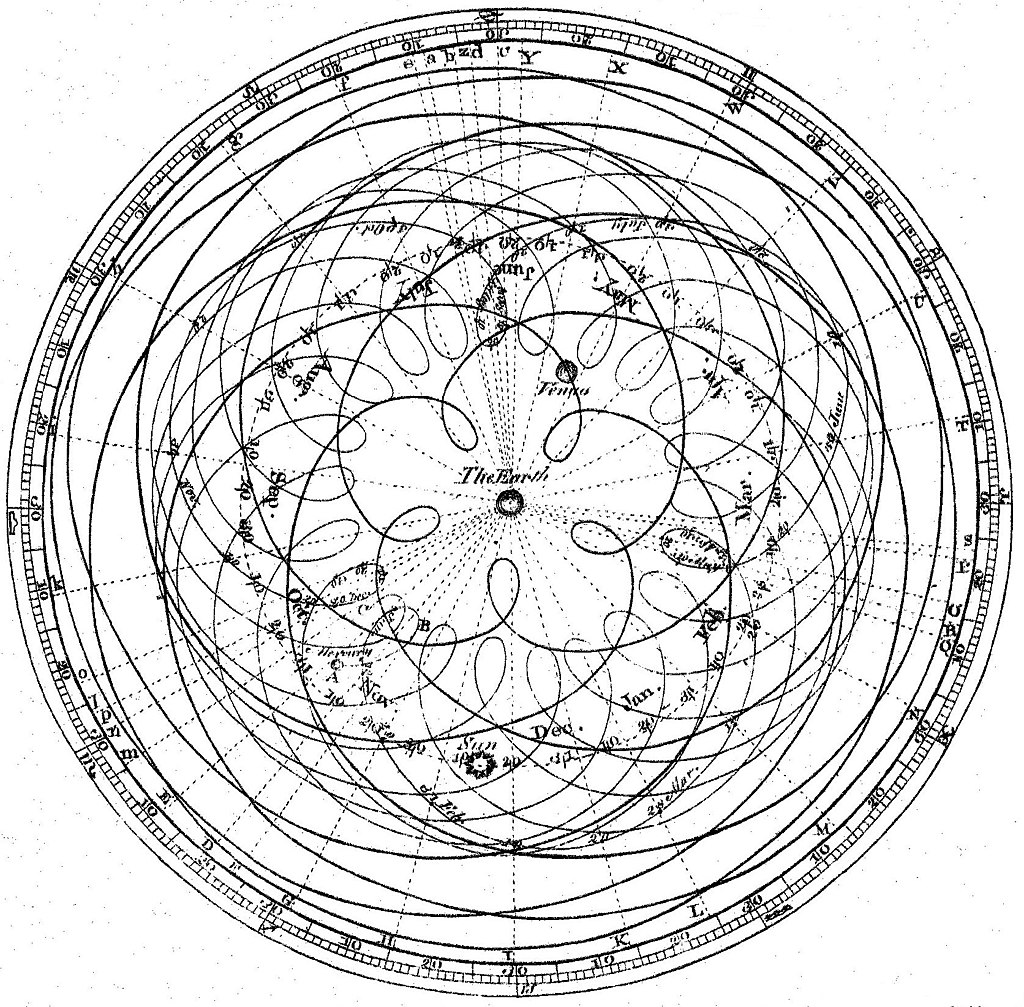

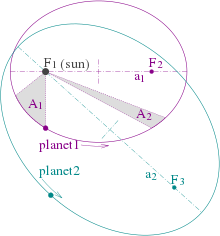

The example of Kepler and the elliptical orbit theory that Kay uses is really good (read more about it here). At that time, there was a religious belief that planets moved in "perfect circles", where the Sun was orbiting the Earth while other objects were orbiting the Sun.

Source: NASA's Earth Observatory (link)

That didn't quite make sense because objects seemed to be in different positions depending on the day (among other problems), so they built a different theory where the orbits were still "perfect circles" but the objects were not going round, but instead moving in a way that at a macro level also built another "perfect circle", something like this:

Source: Wikipedia page on "Deferent and epicycle" (link)